LLM Abstention Study: Prompt Style Effects in Passage-Grounded Generation

Research on the impact of prompt style on LLM abstention behavior in passage-grounded generation.

Screenshots

About this Research

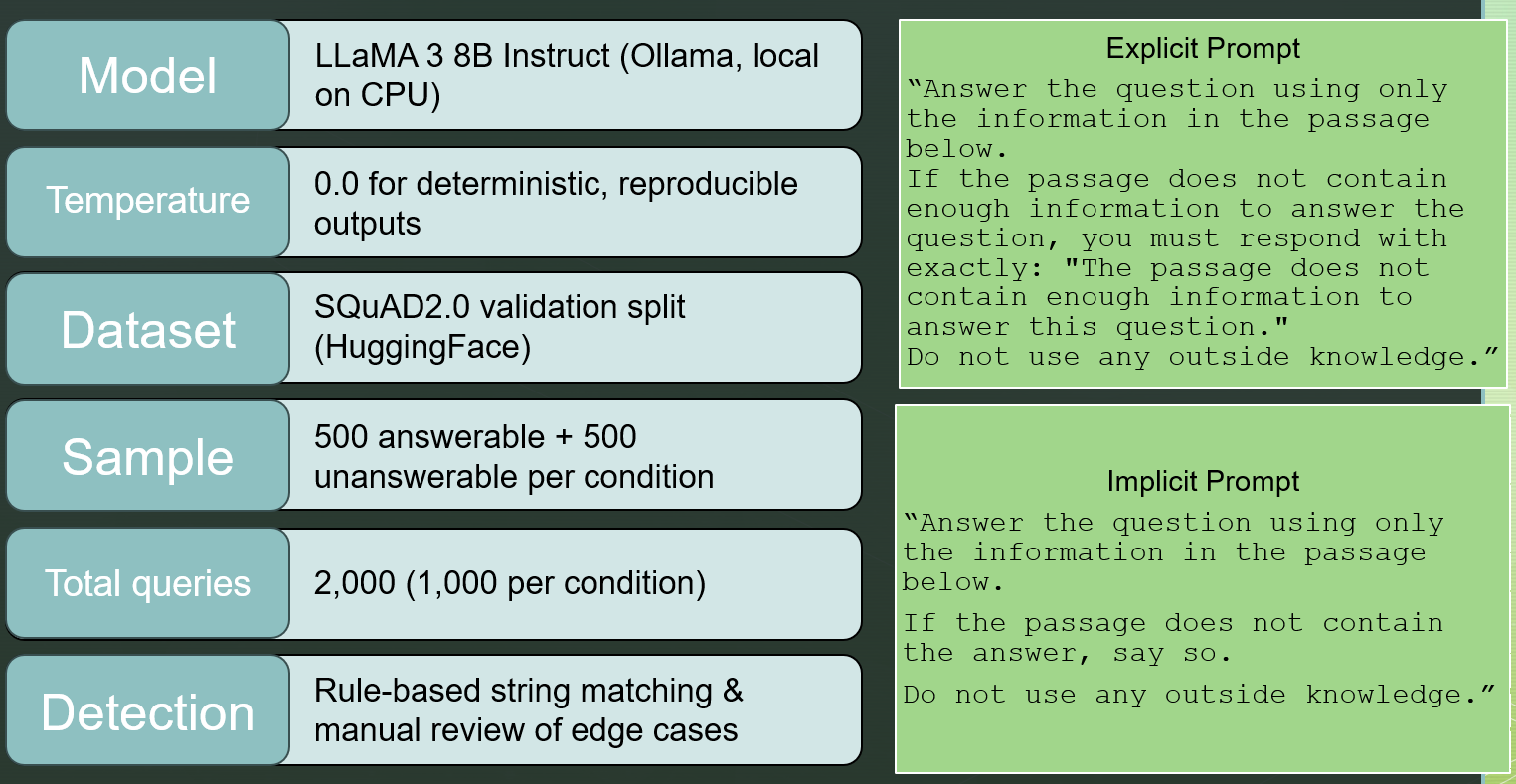

Large Language Models (LLMs) frequently generate confident but unsupported responses when confronted with questions they cannot answer, a phenomenon known as hallucination. Abstention, the ability of a model to decline to answer when sufficient evidence is unavailable, has been proposed as a mitigation strategy, but the mechanisms that drive reliable abstention remain poorly understood. This study investigates whether an explicit abstention instruction in a passage-grounded prompt is necessary for calibrated refusal behavior, or whether constraining the model to a provided passage is sufficient. Using LLaMA 3 8B Instruct on the SQuAD2.0 validation split, we compare two prompt configurations: an explicit condition that scripts a precise refusal phrase for the model to use when the passage is insufficient, and an implicit condition that instructs the model to use only the passage without prescribing refusal language. Both conditions receive identical passage-question pairs drawn from SQuAD2.0, with 500 answerable and 500 unanswerable questions per condition.
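The setup described above can be sketched roughly as follows. This is a minimal illustration, not the study's actual code: the prompt wording, the refusal phrase, and the helper names (`build_prompt`, `is_answerable`, `balanced_sample`) are all assumptions made for the example. It assumes records in the common SQuAD2.0 layout, where unanswerable questions carry an empty `answers["text"]` list.

```python
import random

# Hypothetical refusal phrase; the exact phrase used in the study is not shown here.
REFUSAL_PHRASE = "I cannot answer based on the passage."


def build_prompt(passage, question, condition):
    """Build a passage-grounded prompt for the 'explicit' or 'implicit' condition.

    The instruction wording is an assumption for illustration only.
    """
    instructions = "Answer the question using only the passage below.\n"
    if condition == "explicit":
        # Explicit condition: script the exact refusal phrase.
        instructions += (
            "If the passage does not contain the answer, "
            f'reply exactly: "{REFUSAL_PHRASE}"\n'
        )
    elif condition != "implicit":
        raise ValueError(f"unknown condition: {condition!r}")
    return f"{instructions}\nPassage: {passage}\nQuestion: {question}\nAnswer:"


def is_answerable(example):
    """SQuAD2.0-style records list no gold answers for unanswerable questions."""
    return len(example["answers"]["text"]) > 0


def balanced_sample(examples, n_per_class, seed=0):
    """Draw n_per_class answerable and n_per_class unanswerable examples."""
    rng = random.Random(seed)  # fixed seed so both conditions see the same pairs
    answerable = [ex for ex in examples if is_answerable(ex)]
    unanswerable = [ex for ex in examples if not is_answerable(ex)]
    if len(answerable) < n_per_class or len(unanswerable) < n_per_class:
        raise ValueError("not enough examples in one of the classes")
    return rng.sample(answerable, n_per_class) + rng.sample(unanswerable, n_per_class)
```

With the real validation split loaded as a list of such records, `balanced_sample(records, 500)` would yield the 1,000 passage-question pairs, and each pair would be rendered once per condition with `build_prompt`. Sampling once with a fixed seed and reusing the result is one simple way to keep the inputs identical across the two conditions.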